Table of Contents

SSMalloc: A Low-latency, Locality-conscious Memory Allocator with Stable Performance Scalability

The Problems

The efficiency of memory allocator is a key factor affecting application performance. However, most state-of-art memory allocators only manages to scale well with a small number of application threads. They cannot maintain stable scalability and locality when the number of threads exceeds the number of cores, which may be common in nowadays software. We believe that performance stability is equally important to the above three factors as it can provide more predictable performance for many applications requiring frequent memory allocation. The design space of memory allocators for many-thread application on many-core system merits further investigation.

Approaches

The most important design goal of SSMalloc is stable scalability. Hence, SSMalloc should be designed as lock-free. However, pure lock-free memory allocator is impossible in nowadays operating system. Multiple threads that perform VM management operations (e.g. mmap, munmap) simultaneously will contend in kernel. In the OS kernel, these operations are usually be executed with a global lock held. The design of SSMalloc strictly limited the number of VM management system calls, in which way most of the possible kernel level contention are avoided. Another requirement is low and predictable latency.

To achieve predictable latency, SSMalloc is designed to be nearly-wait-free. Use of loops is strictly limited in lock-free synchronizations. The other part of the algorithm could be done in bounded steps, where the critical path of SSMalloc is very short.

To achieve stable cache locality, all the data structure are carefully arranged. Fields frequently used together locally are grouped into the same cache line. Fields that may be read or write by multiple threads are seprarated in different cache lines to avoid false sharing. To present self-virtualization technique, we need both static alternations and runtime supports.

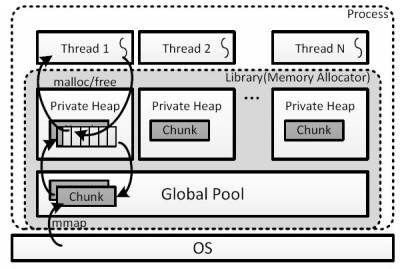

Here is the figure of the overall architecture of SSMalloc.

SSMalloc maintains a private heap for each application thread. A thread operates on its own heap for most requests, thus eliminates most of the synchronization. Each private heap holds several fixed-size memory chunks. A memory chunk contains many objects of the same size class.

When the thread private heap space is insufficient to fulfill a subsequent request, a free memory chunk is fetched from a global pool. If the thread private heap holds too many free memory chunks, some chunks will be reclaimed back to the global pool. Most of the operations on global pool is done purely by lock-free operations except for global pool enlargement. The global pool is enlarged exponentially each time, in this way time spent in mmap calls could be greatly reduced.

Results

Experiments using a number of commonly used allocation-benchmarks running on a 48-core machines show that for most cases SSMalloc outperforms prior systems in allocation latency and access locality, and provides more stable and scalable performance.

Here is some experimental results.

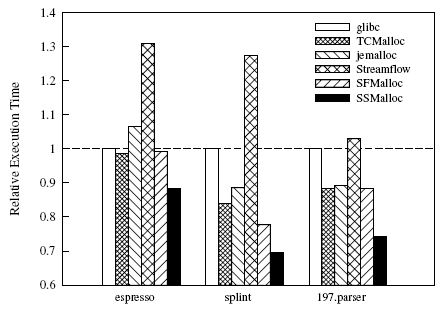

Sequential Performance

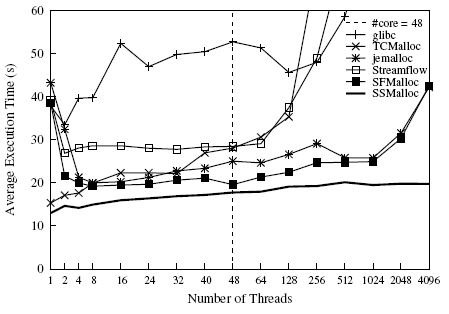

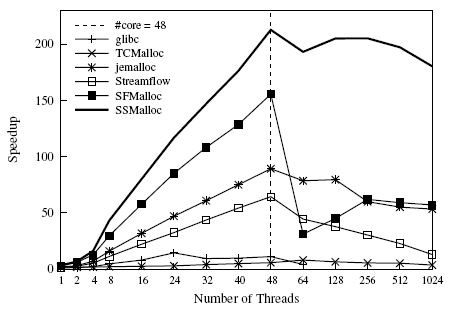

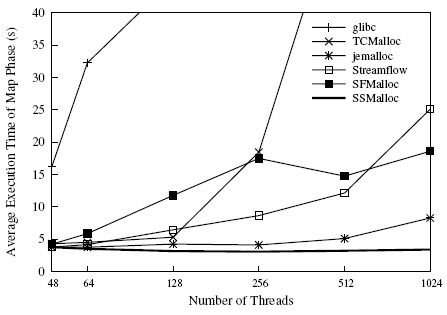

Multi-thread Scalability and Stableness

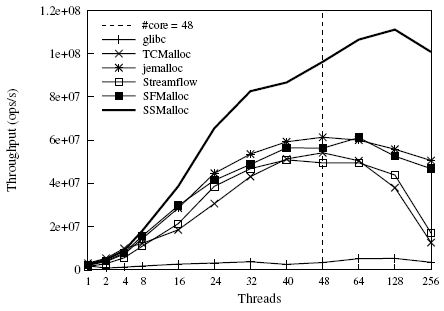

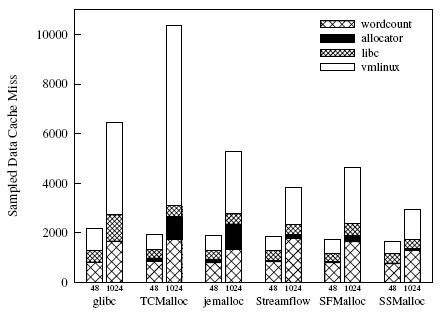

Locality Stableness

Summary

We have proposed an memory allocator SSMalloc. SSMalloc explored the design space of memory allocator for many-thread programs on many-core system. It simutaneously provides very low and predicatable latency, stable high scalability, and stable cache locality. We have evaluated SSMalloc using 7 different workloads. Exprimental results show that SSMalloc have major performance advantage with sequential workloads and scale much better with many threads than previous memory allocators. SSMalloc also provide stable cache locality with many threads.

Publications

- Ran Liu and Haibo Chen. SSMalloc: A Low-latency, Locality-conscious Memory Allocator with Stable Performance Scalability. In Proceedings of 3rd ACM SIGOPS Asia-Pacific Workshop on Systems (APSys 2012), Seoul, Korea. [pdf]